Advanced AI Concepts: Deep Dive into NLP and Computer Vision

The landscape of Artificial Intelligence is continuously evolving, with Natural Language Processing (NLP) and Computer Vision standing out as two of its most transformative branches. This article takes a deep dive into both fields, exploring how they work, where they are applied, and the challenges that define their current frontiers. Understanding these fields is crucial for anyone looking to grasp the cutting edge of AI, from developing intelligent systems to interpreting complex data. We aim to provide a broad overview, highlighting how these technologies are not just theoretical constructs but practical tools reshaping industries worldwide.

Key Points:

- NLP Fundamentals: Understanding how machines process, interpret, and generate human language.

- Computer Vision Essentials: Exploring how AI enables computers to "see" and interpret visual data.

- Advanced Techniques: Delving into transformer models, generative adversarial networks (GANs), and multimodal AI.

- Real-World Impact: Examining applications in healthcare, autonomous systems, and personalized experiences.

- Future Outlook & Challenges: Discussing ethical considerations, data bias, and the path forward for these dynamic fields.

Unpacking Natural Language Processing (NLP): Beyond Basic Text Analysis

Natural Language Processing (NLP) is a cornerstone of modern AI, enabling computers to understand, interpret, and generate human language. The field has moved far beyond simple keyword matching to semantic understanding and context-aware interaction. Modern NLP models, particularly those built on deep learning, can pick up nuances in language that were once thought to be exclusively human capabilities. These systems power everything from virtual assistants to complex data analysis tools.

The Rise of Transformer Models in NLP

The introduction of transformer models has revolutionized NLP, pushing the boundaries of what's possible in language understanding and generation. Architectures such as BERT, GPT, and T5 use self-attention mechanisms to weigh the importance of every word in a sequence relative to every other word, capturing long-range dependencies more effectively than earlier recurrent neural networks (RNNs). This allows for much richer contextual understanding, leading to significant improvements in tasks such as machine translation, text summarization, and sentiment analysis. Because self-attention processes all positions in a sequence in parallel rather than one step at a time, transformers can also be trained far more efficiently on modern hardware.
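To make the self-attention idea concrete, here is a minimal sketch of scaled dot-product attention in plain Python. It is an illustration under simplifying assumptions, not how BERT, GPT, or T5 are actually implemented: the token embeddings are made up, and the same vectors serve as queries, keys, and values, whereas real transformers first apply learned projection matrices and use multiple attention heads.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries, keys, values):
    """Scaled dot-product attention over one sequence of vectors."""
    d_k = len(keys[0])
    outputs, all_weights = [], []
    for q in queries:
        # Score this token against every token in the sequence.
        scores = [
            sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k)
            for k in keys
        ]
        weights = softmax(scores)
        # The output is the attention-weighted average of the value vectors.
        out = [
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ]
        outputs.append(out)
        all_weights.append(weights)
    return outputs, all_weights

# Hypothetical 2-dimensional embeddings for a three-token sentence.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
outputs, weights = self_attention(tokens, tokens, tokens)

for i, row in enumerate(weights):
    print(f"token {i} attends with weights {[round(w, 3) for w in row]}")
```

Each row of `weights` sums to 1 and shows how strongly one token draws on every other token. Every row is computed independently of the others, which is what makes the parallel processing described above possible.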

Advanced NLP Applications and Their Impact

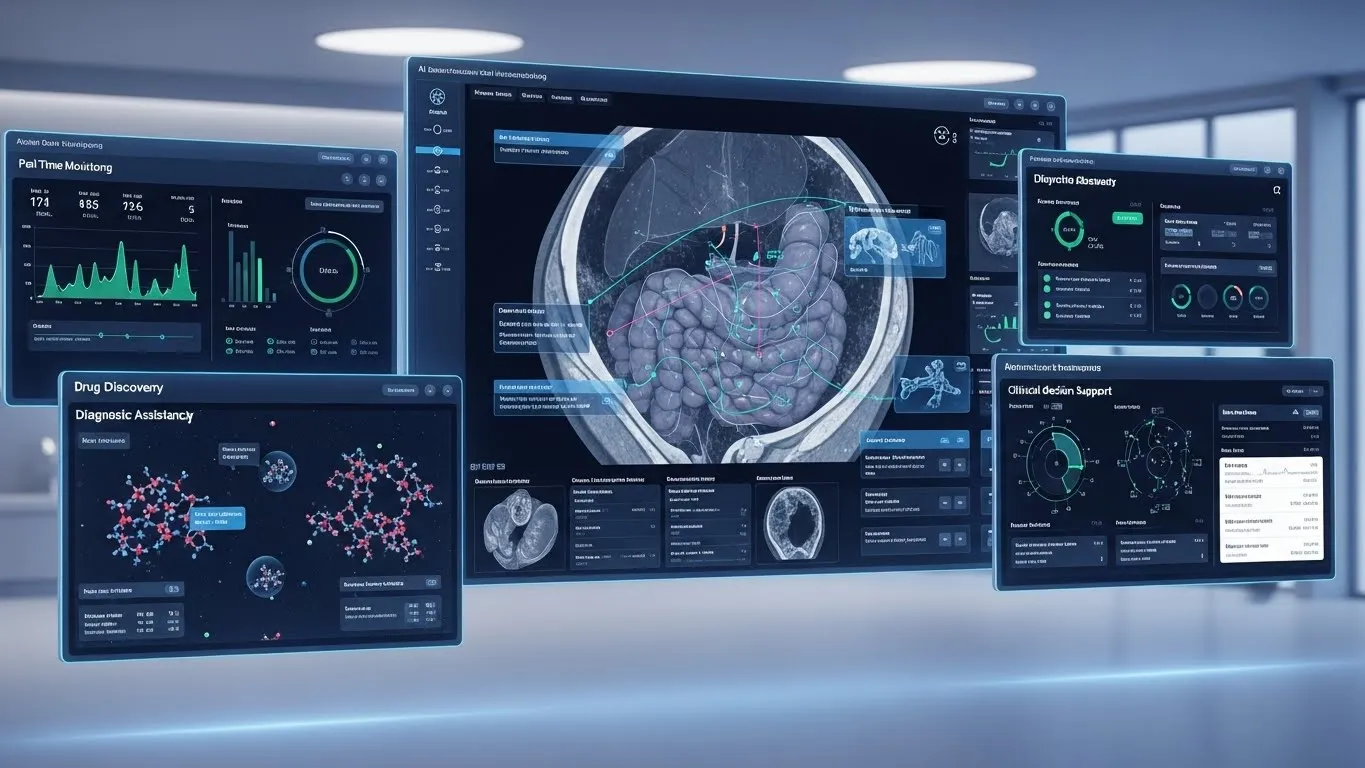

The practical applications of advanced NLP are vast and continue to expand. In healthcare, NLP systems analyze vast amounts of medical literature and patient records to assist in diagnosis and drug discovery. For instance, a recent study published in Nature Medicine (2024) highlighted how NLP models could identify subtle patterns in clinical notes, leading to earlier detection of certain chronic diseases. In customer service, sophisticated chatbots and virtual assistants powered by NLP provide personalized support, understanding complex queries and offering relevant solutions. Furthermore, in the realm of AI recommendation and personalization systems, NLP plays a critical role in understanding user preferences from text interactions, reviews, and search queries, leading to highly tailored content suggestions.

Decoding Computer Vision: Enabling Machines to See and Understand

Computer Vision is another pivotal area of AI, focused on enabling computers to derive meaningful information from digital images and videos. This field has transformed how machines interact with the physical world, allowing them to perceive, process, and interpret visual data in ways that mimic human sight. From recognizing faces to navigating autonomous vehicles, the advancements in computer vision are profound and far-reaching.

Deep Learning Architectures for Visual Perception

At the heart of modern Computer Vision are deep learning architectures, particularly Convolutional Neural Networks (CNNs). CNNs excel at identifying hierarchical patterns in visual data, from edges and textures in early layers to complex objects and scenes in deeper ones. For video analysis, where a model must follow sequences of frames over time, Recurrent Neural Networks (RNNs) are often combined with CNN feature extractors. More recently, Vision Transformers (ViT) have adapted the transformer architecture, initially successful in NLP, to achieve state-of-the-art results in image recognition tasks, demonstrating the cross-pollination of ideas across AI subfields.
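The lowest level of that hierarchy, edge detection, can be sketched with a single convolution. The example below is an illustration only: the image is a made-up 4x6 grid, and the kernel is a hand-picked Prewitt-style vertical-edge filter, whereas a real CNN learns its kernel values during training and stacks many such layers.

```python
def conv2d(image, kernel):
    """Stride-1 2D cross-correlation with no padding, as used in CNN layers."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    output = []
    for r in range(out_h):
        row = []
        for c in range(out_w):
            # Multiply the kernel with the window under it and sum the result.
            acc = 0
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        output.append(row)
    return output

# Made-up grayscale image: dark on the left, bright on the right.
image = [
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
    [0, 0, 0, 1, 1, 1],
]

# Hand-picked kernel that responds to left-to-right brightness increases.
kernel = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]

feature_map = conv2d(image, kernel)
print(feature_map)  # [[0, 3, 3, 0], [0, 3, 3, 0]]
```

The feature map is zero over the flat regions and nonzero only where the window straddles the dark-to-bright boundary, which is the sense in which a convolutional filter "detects" an edge.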

Cutting-Edge Computer Vision Applications

The impact of advanced Computer Vision is evident across numerous sectors. In autonomous driving, computer vision systems are critical for real-time object detection, lane keeping, and pedestrian recognition, all of which support safe navigation. In manufacturing, these systems perform quality control, identifying defects quickly and consistently. Security and surveillance rely heavily on facial recognition and anomaly detection capabilities. A report by MIT Technology Review (2023) emphasized the growing reliance on computer vision in smart city initiatives, from traffic management to public safety monitoring. For more insights into how these visual systems are integrated into broader intelligent frameworks, readers can explore related articles on AI-powered surveillance.

The Convergence of NLP and Computer Vision: Multimodal AI

One of the most exciting developments in AI today is multimodal AI, which combines the strengths of NLP and Computer Vision. This approach allows AI systems to process and understand information from multiple modalities simultaneously, such as text, images, and audio. The goal is to create AI that can interpret the world more holistically, much like humans do.

Bridging the Gap with Multimodal Models

Multimodal models are designed to learn representations that capture the relationships between different data types. For example, a model might be trained to understand an image based on its textual description, or to generate a caption for an image. Techniques like cross-modal attention enable these models to focus on relevant parts of one modality while processing another. This integration is crucial for tasks requiring a comprehensive understanding of context, such as visual question answering or generating descriptive narratives for videos.
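As a rough illustration of cross-modal attention, the sketch below lets each text token attend over a set of image-region features. Everything here is hypothetical: the embeddings are hand-made so that the result is easy to read, the region labels are invented, and real multimodal models learn these representations and add projection layers that are omitted here.

```python
import math

def cross_attention(text_queries, image_keys, image_values):
    """For each text token, weight the image regions and blend their features."""
    scale = math.sqrt(len(image_keys[0]))
    attended, weights_per_token = [], []
    for q in text_queries:
        # Similarity between this text token and every image region.
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in image_keys]
        peak = max(scores)
        exps = [math.exp(s - peak) for s in scores]
        total = sum(exps)
        weights = [e / total for e in exps]
        # Blend region features according to the attention weights.
        blended = [
            sum(w * v[d] for w, v in zip(weights, image_values))
            for d in range(len(image_values[0]))
        ]
        attended.append(blended)
        weights_per_token.append(weights)
    return attended, weights_per_token

# Hypothetical text-token embeddings.
text_tokens = {"dog": [2.0, 0.0], "grass": [0.0, 2.0]}

# Hypothetical image-region features: region 0 resembles an animal,
# region 1 resembles a lawn, region 2 is a mostly uninformative background.
image_regions = [[1.0, 0.0], [0.0, 1.0], [0.1, 0.1]]

attended, weights = cross_attention(
    list(text_tokens.values()), image_regions, image_regions
)
best_region = [w.index(max(w)) for w in weights]

for word, region in zip(text_tokens, best_region):
    print(f"'{word}' attends most to image region {region}")
```

The queries come from one modality and the keys and values from another, which is the only structural difference from self-attention within a single sequence.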

Real-World Multimodal AI Scenarios

The applications of multimodal AI are rapidly expanding. In content creation, AI can generate compelling stories by combining visual elements with descriptive text. For accessibility, multimodal systems can describe images to visually impaired users or translate sign language into spoken words. In robotics, combining visual perception with natural language commands allows robots to understand complex instructions and interact more naturally with their environment. The potential for these integrated systems to enhance user experience in AI recommendation and personalization systems is immense, offering recommendations based on a richer understanding of user context from both their visual and textual interactions.

Challenges and Ethical Considerations in Advanced AI

While the progress in NLP and Computer Vision is remarkable, significant challenges and ethical considerations remain. Addressing these issues is paramount for the responsible development and deployment of AI technologies.

Data Bias and Fairness

One of the most pressing concerns is data bias. AI models are only as good as the data they are trained on. If training datasets contain biases – whether historical, societal, or demographic – the models will learn and perpetuate these biases. This can lead to unfair or discriminatory outcomes in applications like facial recognition, hiring algorithms, or loan approvals. Ensuring diverse and representative datasets is a continuous challenge. A white paper by the AI Ethics Institute (2025) highlighted the critical need for transparent data auditing practices to mitigate these biases.
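One simple form such an audit can take is comparing how often a model produces a positive outcome for each group. The sketch below computes per-group selection rates and the gap between them (often called the demographic parity difference). The records are made up, the loan-approval scenario is hypothetical, and this single metric is only one of several fairness measures; it is not drawn from the white paper cited above.

```python
from collections import defaultdict

def selection_rates(records):
    """Fraction of positive predictions per group.

    records: iterable of (group, prediction) pairs, prediction is 0 or 1.
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, prediction in records:
        totals[group] += 1
        positives[group] += prediction
    return {group: positives[group] / totals[group] for group in totals}

def demographic_parity_gap(records):
    """Difference between the highest and lowest group selection rates."""
    rates = selection_rates(records)
    return max(rates.values()) - min(rates.values())

# Made-up predictions from a hypothetical loan-approval model (1 = approved).
records = [
    ("A", 1), ("A", 1), ("A", 1), ("A", 0),
    ("B", 1), ("B", 0), ("B", 0), ("B", 0),
]

rates = selection_rates(records)
gap = demographic_parity_gap(records)
print(rates)  # {'A': 0.75, 'B': 0.25}
print(gap)    # 0.5
```

A gap near zero means the groups are approved at similar rates. A large gap does not prove discrimination on its own, but it flags where the training data and model deserve closer inspection.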

Privacy and Security Implications

The widespread use of NLP and Computer Vision also raises significant privacy and security concerns. Facial recognition technology, for example, can be used for mass surveillance, potentially infringing on individual liberties. Similarly, NLP models processing sensitive personal data require robust security measures to prevent breaches. Balancing the benefits of these technologies with the imperative to protect individual privacy is a complex ethical tightrope. Developing privacy-preserving AI techniques, such as federated learning, is an active area of research.
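The core idea of federated learning is that devices train locally and share only model parameters, never raw data. The sketch below shows just the server-side aggregation step, in the style of federated averaging. The client weights and dataset sizes are made-up numbers, and local training, communication, and protections such as secure aggregation are all left out.

```python
def federated_average(client_weights, client_sizes):
    """Average client model weights, weighted by each client's sample count."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]

# Hypothetical weights produced by local training on two devices.
client_weights = [
    [1.0, 2.0],  # client 1, trained on 100 private examples
    [3.0, 4.0],  # client 2, trained on 300 private examples
]
client_sizes = [100, 300]

global_weights = federated_average(client_weights, client_sizes)
print(global_weights)  # [2.5, 3.5]
```

The server only ever sees the weight vectors and the sample counts. The client with more data pulls the global model further toward its own parameters.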

Explainability and Transparency

As AI models become more complex, their decision-making processes can become opaque, leading to the "black box" problem. Explainable AI (XAI) is an emerging field dedicated to making AI models more transparent and interpretable, allowing humans to understand why a model made a particular decision. This is especially critical in high-stakes applications like medical diagnosis or autonomous vehicle control, where trust and accountability are paramount.

The Future Landscape of NLP and Computer Vision

The future of NLP and Computer Vision promises even more groundbreaking innovations. We can anticipate further integration, enhanced capabilities, and a broader societal impact.

Generative AI and Foundation Models

The emergence of generative AI and foundation models is set to redefine both fields. Large language models (LLMs) are already demonstrating incredible capabilities in generating human-like text, while generative adversarial networks (GANs) and diffusion models are creating realistic images and videos. These models, often trained on vast amounts of data, can be adapted to a wide range of downstream tasks, paving the way for more versatile and powerful AI systems. The ability of these models to synthesize novel content opens new avenues for creativity and problem-solving.

Edge AI and Real-time Processing

Another significant trend is the shift towards Edge AI, where AI computations are performed closer to the data source, rather than in centralized cloud servers. This enables real-time processing, reduces latency, and enhances privacy, which is crucial for applications like autonomous vehicles, smart cameras, and wearable devices. The optimization of NLP and Computer Vision models for resource-constrained environments will be a key area of development.
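One common way to fit models into resource-constrained devices is quantization, which stores weights as small integers instead of 32-bit floats. The text above does not name a specific technique, so treat this as one illustrative option: a minimal sketch of symmetric 8-bit quantization on a made-up weight list, not the procedure of any particular toolkit.

```python
def quantize_int8(values):
    """Symmetric linear quantization: map floats to integers in [-127, 127]."""
    max_abs = max(abs(v) for v in values)
    # One shared scale factor converts between float and integer ranges.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [round(v / scale) for v in values]
    return quantized, scale

def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]

# Made-up model weights.
weights = [0.5, -1.27, 0.01, 1.0]

quantized, scale = quantize_int8(weights)
restored = dequantize(quantized, scale)

print(quantized)  # [50, -127, 1, 100]
print(max(abs(a - b) for a, b in zip(weights, restored)))  # small rounding error
```

Each weight now needs one byte instead of four, at the cost of a rounding error of at most half the scale factor per weight. Whether that trade-off is acceptable depends on the model and the task.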

Human-AI Collaboration

The future will also see a greater emphasis on human-AI collaboration. Instead of AI replacing human intelligence, the focus will be on creating synergistic systems where AI augments human capabilities. This could involve AI tools assisting designers, doctors, or educators, allowing them to perform their tasks more efficiently and effectively. For further reading on the symbiotic relationship between humans and intelligent systems, explore our content on human-in-the-loop AI.

Conclusion: Shaping the Future with Advanced AI

Our deep dive into NLP and Computer Vision reveals fields brimming with innovation and potential. From understanding the nuances of human language to interpreting the complexities of the visual world, these technologies are not just advancing; they are converging to create more intelligent, adaptive, and context-aware AI systems. While challenges related to bias, privacy, and explainability demand careful attention, ongoing research and development promise a future where AI continues to unlock new capabilities.

The journey into advanced AI is continuous, offering endless opportunities for exploration and application. We encourage you to engage with these concepts, contribute to their development, and consider their ethical implications. Share your thoughts in the comments below, or subscribe to our newsletter for the latest updates on AI advancements. For those interested in expanding their knowledge, consider exploring articles on the ethical frameworks for AI development or the latest breakthroughs in multimodal machine learning.

FAQ Section

Q1: What are the primary differences between NLP and Computer Vision?

A1: NLP focuses on enabling computers to understand, interpret, and generate human language, whether spoken or written. It deals with text analysis, sentiment recognition, and machine translation. Computer Vision, on the other hand, empowers machines to "see" and interpret visual data from images and videos, performing tasks like object detection, facial recognition, and image classification. While distinct, they increasingly converge in multimodal AI.

Q2: How do transformer models enhance NLP and Computer Vision?

A2: Transformer models, originally developed for NLP, significantly enhance both fields by using self-attention mechanisms. In NLP, they allow models to weigh different words in a sentence, capturing context more effectively. In Computer Vision, Vision Transformers (ViT) adapt this mechanism to process image patches, achieving state-of-the-art results in image recognition by understanding global relationships within an image, rather than just local features.
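The first step of that adaptation, turning an image into a sequence of patches, can be sketched as follows. This is a simplified illustration on a made-up 4x4 single-channel image: an actual Vision Transformer would then project each flattened patch to an embedding and add position information before applying attention.

```python
def image_to_patches(image, patch_size):
    """Split a 2D image into flattened, non-overlapping square patches."""
    height, width = len(image), len(image[0])
    if height % patch_size or width % patch_size:
        raise ValueError("image dimensions must be divisible by patch_size")
    patches = []
    for top in range(0, height, patch_size):
        for left in range(0, width, patch_size):
            # Read the patch row by row and flatten it into one vector.
            patch = [
                image[top + r][left + c]
                for r in range(patch_size)
                for c in range(patch_size)
            ]
            patches.append(patch)
    return patches

# Made-up 4x4 image whose pixel values are simply 0..15.
image = [[row * 4 + col for col in range(4)] for row in range(4)]

patches = image_to_patches(image, 2)
print(patches)
# [[0, 1, 4, 5], [2, 3, 6, 7], [8, 9, 12, 13], [10, 11, 14, 15]]
```

Each patch plays the role that a word token plays in NLP, so attention can relate any patch to any other patch regardless of how far apart they are in the image.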

Q3: What are some ethical concerns associated with advanced AI concepts?

A3: Key ethical concerns include data bias, where AI models perpetuate societal biases from their training data, leading to unfair outcomes. Privacy is another major issue, especially with technologies like facial recognition and the processing of sensitive personal data. The "black box"